The Productivity Illusion

Here’s a number that should terrify every engineering leader: 95% of developers using AI coding assistants report feeling more productive. Sounds great, right?

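Now here’s the punchline: a randomized controlled trial by METR found that experienced open-source developers using AI tools were actually 19% slower on real-world tasks, even though they believed afterward that the tools had sped them up by about 20%. Not faster. Slower.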

Welcome to the greatest productivity illusion in software engineering history.

As a Technical Lead who’s been reviewing AI-assisted PRs for over two years, I’ve watched this pattern unfold in real time. The developer commits 200 lines in an hour, feels like a superhero, and the PR looks clean at first glance. But three sprints later, that module is the source of half our bug tickets. The code works. It just doesn’t make sense.

This isn’t a theoretical concern. This is a $4 billion problem that’s already here.

What Exactly Is “Vibe Coding”?

The term was coined by Andrej Karpathy in February 2025:

“There’s a new kind of coding I call ‘vibe coding’, where you fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

In practice, vibe coding means:

- Accepting AI suggestions without reading them

- Prompting until the tests pass, then moving on

- Treating the LLM as a black box that produces “working” code

- Never building a mental model of what was generated

It’s the difference between driving a car and being a passenger who occasionally shouts directions. Both get you somewhere. Only one lets you handle the unexpected.

The Data: 3x Debt Acceleration

Let’s look at what the research actually shows.

Bug Amplification

| Study | Finding | Year |
|---|---|---|
| Uplevel (2,600 devs) | 41% more bugs after Copilot adoption | 2024 |
| CodeRabbit (4M PRs) | 1.7x more “major” issues in AI co-authored code | 2025 |
| ICSE 2026 | Technical debt accumulates at 3x the normal rate | 2026 |
| GitClear (150M LOC) | Code churn (code rewritten within 2 weeks) doubled | 2024 |

The Comprehension Debt Problem

Addy Osmani (Chrome engineering lead at Google) introduced a concept that perfectly captures the core issue: comprehension debt.

Traditional technical debt is code you wrote but cut corners on. Comprehension debt is code that exists in your codebase but nobody understands. Not the person who prompted it. Not their teammates. Not even the AI that generated it next week, because context windows don’t persist.

“AI doesn’t just write code you don’t understand. It writes code that can’t be understood without the original prompt context, which was never saved.” — Osmani, O’Reilly 2025

This is fundamentally different from traditional tech debt. You can’t refactor what you can’t comprehend. You can only rewrite it.

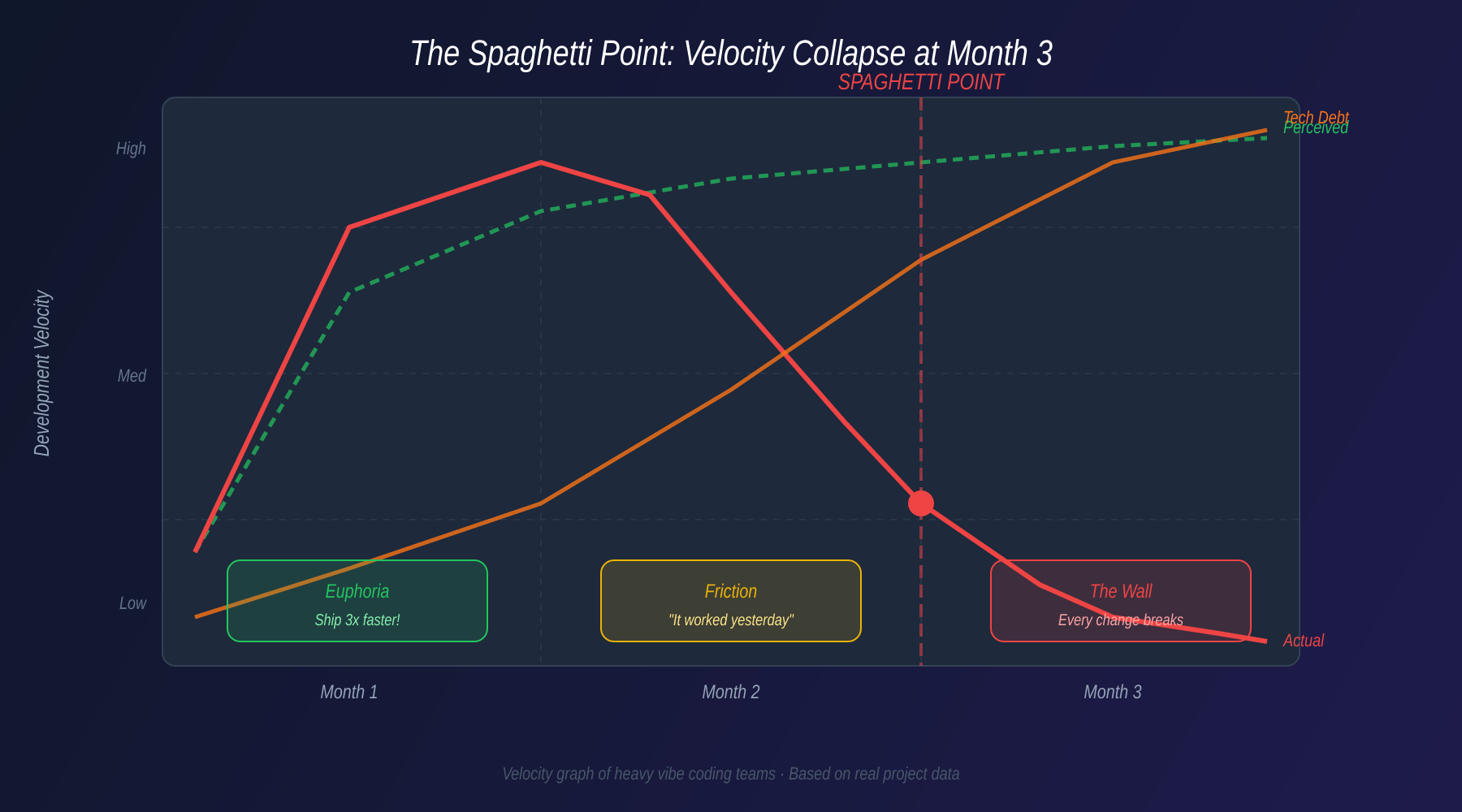

The Spaghetti Point

Teams I’ve worked with hit what I call the Spaghetti Point at approximately month 3 of heavy vibe coding:

- Month 1: Euphoria. Shipping 3x faster. Features flying out the door.

- Month 2: Friction. Strange bugs. “It worked yesterday.” Integration issues.

- Month 3: The Wall. Every change breaks something else. New features take longer than they would have without AI. The team starts avoiding certain modules.

The velocity graph looks like a hockey stick — but upside down.

Real-World Disasters

Amazon’s AI-Assisted Outage (March 2026)

The most expensive vibe coding incident to date: Amazon’s checkout system experienced a 6-hour global outage caused by AI-assisted code that passed all tests but contained a subtle race condition in the payment processing pipeline.

- 6.3 million lost orders

- Estimated $180M+ in lost revenue

- Root cause: AI-generated retry logic that looked correct but created a thundering herd problem under load

The code was clean. The tests were green. The architecture was wrong.
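To make the thundering herd failure mode concrete, here is a minimal sketch. It is hypothetical and not the code from the incident: `fixedDelay`, `jitteredBackoff`, and `peakRetriesPerBucket` are names invented for illustration. It simulates clients that all failed at the same moment and compares a fixed retry delay with exponential backoff plus random jitter.

```typescript
// Hypothetical sketch; not the code from the incident described above.
type DelayFn = (attempt: number) => number;

// Naive policy: every client waits the same fixed delay before retrying,
// so clients that failed together also retry together.
const fixedDelay: DelayFn = () => 1000;

// Exponential backoff with "full jitter": a random delay in
// [0, min(capMs, baseMs * 2^attempt)), so retries spread across the window.
function jitteredBackoff(
  random: () => number = Math.random,
  baseMs = 100,
  capMs = 10000,
): DelayFn {
  return (attempt) =>
    Math.floor(random() * Math.min(capMs, baseMs * Math.pow(2, attempt)));
}

// Simulate `clients` callers that all failed at t = 0 and report the largest
// number of retries that land in the same `bucketMs`-wide time slot.
function peakRetriesPerBucket(
  clients: number,
  delay: DelayFn,
  attempt: number,
  bucketMs = 10,
): number {
  const buckets: Record<number, number> = {};
  let peak = 0;
  for (let i = 0; i < clients; i++) {
    const bucket = Math.floor(delay(attempt) / bucketMs);
    buckets[bucket] = (buckets[bucket] ?? 0) + 1;
    peak = Math.max(peak, buckets[bucket]);
  }
  return peak;
}
```

With the fixed delay, `peakRetriesPerBucket(1000, fixedDelay, 3)` is 1000: every caller hits the service in the same slot. With `jitteredBackoff()` the same 1000 retries are spread over an 800 ms window. Both versions look equally reasonable in a unit test, which is why this kind of bug tends to surface only under load.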

Moltbook: 1.5 Million API Keys Leaked

A fintech startup shipped AI-generated code that hardcoded API keys in a configuration module. The AI had been trained on repositories where this was common practice. Within 3 days of deployment:

- 1.5 million API keys exposed

- Full database access compromised

- Company folded within 6 months
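For contrast, here is a minimal sketch of keeping keys out of source entirely. The helper names (`requireSecret`, `maskSecret`) and the variable name `PAYMENTS_API_KEY` are assumptions made for illustration; a real deployment would more likely pull from a secrets manager, but the principle is the same: the key lives outside the repository and the process fails fast when it is missing.

```typescript
// Anti-pattern (what the incident above describes): a secret committed to source.
// const API_KEY = "<real key pasted here>";

type Env = Record<string, string | undefined>;

/** Read a required secret from the environment, failing fast if it is missing. */
function requireSecret(env: Env, name: string): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing required secret: ${name}`);
  }
  return value;
}

/** Mask a secret for logs so the full value never reaches log storage. */
function maskSecret(secret: string): string {
  return secret.length <= 4 ? "****" : "****" + secret.slice(-4);
}

// Typical call site in Node (the variable name is hypothetical):
// const apiKey = requireSecret(process.env, "PAYMENTS_API_KEY");
```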

The 8,000 Startup Graveyard

According to a 2026 industry analysis, over 8,000 AI-first startups built primarily through vibe coding now require complete or near-complete rebuilds. The estimated cleanup cost: $400 million to $4 billion.

These aren’t failed companies. Many have paying customers and revenue. They just can’t maintain what they built. Every bug fix introduces two new bugs. Every feature takes 5x longer than it should.

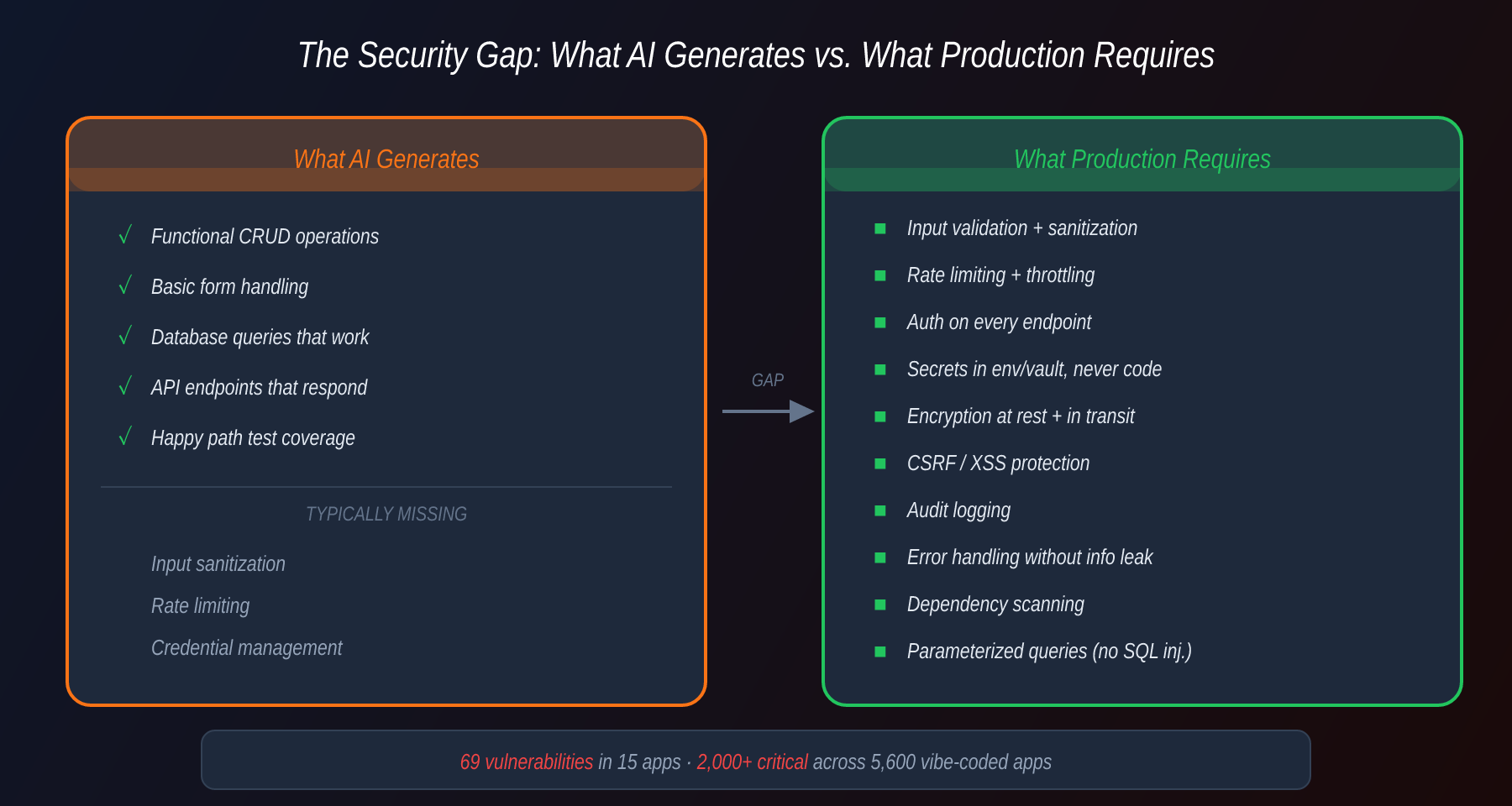

The Security Time Bomb

Security is where vibe coding gets genuinely dangerous.

A systematic audit of 15 vibe-coded applications found 69 vulnerabilities, including:

- SQL injection in 11 of 15 apps

- Hardcoded credentials in 8 of 15

- Missing authentication on admin endpoints in 6 of 15

- Cross-site scripting in 13 of 15

A broader study across 5,600 vibe-coded applications identified over 2,000 critical vulnerabilities.

The pattern is always the same: the AI generates code that functions correctly but ignores security best practices. It doesn’t add rate limiting because you didn’t ask for it. It doesn’t sanitize inputs because the prompt said “build a form.” It doesn’t encrypt at rest because the happy path doesn’t require it.
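As one illustration of what the happy path leaves out, here is a sketch of the difference between concatenating user input into SQL and sending it as a parameter. The `sql` tag and the `ParameterizedQuery` shape are hypothetical helpers written for this example. The `$1` placeholder style follows the PostgreSQL convention; other databases use `?`, and what your driver accepts is something to check rather than assume.

```typescript
interface ParameterizedQuery {
  text: string;      // SQL with $1, $2, ... placeholders
  values: unknown[]; // user input, kept separate from the SQL text
}

/** Tagged template that turns each interpolation into a numbered placeholder. */
function sql(strings: TemplateStringsArray, ...values: unknown[]): ParameterizedQuery {
  let text = strings[0];
  for (let i = 0; i < values.length; i++) {
    text += "$" + (i + 1) + strings[i + 1];
  }
  return { text, values };
}

const email = "x' OR '1'='1";

// Vulnerable: the input becomes part of the SQL statement itself.
const unsafe = "SELECT * FROM users WHERE email = '" + email + "'";

// Safer: the statement is fixed and the input travels separately.
const safe = sql`SELECT * FROM users WHERE email = ${email}`;
// safe.text   is "SELECT * FROM users WHERE email = $1"
// safe.values is ["x' OR '1'='1"]
```

Both versions return the right rows for a normal email address, which is exactly why the vulnerable one survives a "does it work?" check.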

Why AI Gets Security Wrong

LLMs are trained on something close to the average of all code ever written, and the average code has mediocre security. So AI tends to generate code with mediocre security, and developers who are vibe coding don’t know enough to catch what’s missing.

This creates what I call the competence paradox: the developers most likely to vibe code (junior or AI-native) are the least equipped to evaluate the security implications of what they accept.

Gartner’s 2,500% Warning

Gartner projects a 2,500% increase in AI-generated code defects by 2028 if current adoption patterns continue without corresponding quality practices. That’s not a typo. Twenty-five hundred percent.

Their model accounts for:

- Increasing AI adoption rates (currently ~75% of professional developers)

- Declining code review thoroughness (“AI wrote it, it’s probably fine”)

- Compounding debt from AI-modifying-AI-generated code

- Reduced developer comprehension of their own codebases

The scariest part? We’re only at the beginning of the curve.

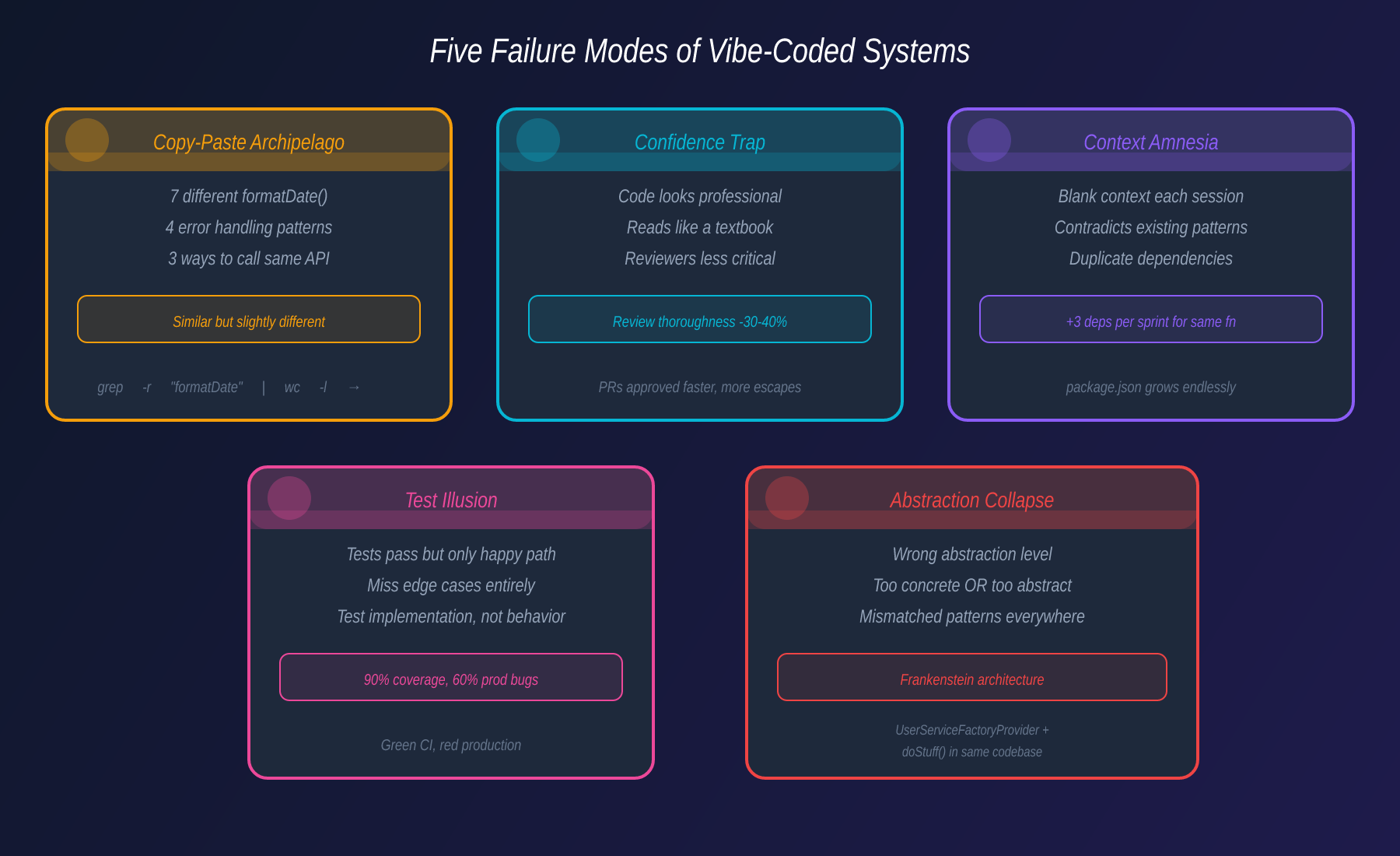

The Five Failure Modes

After reviewing hundreds of AI-assisted codebases, I’ve categorized the failures into five distinct patterns:

1. The Copy-Paste Archipelago

AI generates similar but slightly different solutions for the same problem in different parts of the codebase. You end up with 7 different date formatting functions, 4 different error handling patterns, and 3 different ways to call the same API.

Symptom: grep -r "formatDate" src/ | wc -l returns a number that makes you weep.

2. The Confidence Trap

AI-generated code is syntactically perfect and reads like a textbook. This makes reviewers less critical. Studies show code review thoroughness drops 30-40% for AI-generated code because it “looks professional.”

Symptom: PRs get approved faster, but defect escape rate increases.

3. The Context Amnesia

Each AI interaction starts with a blank context window. The model doesn’t remember the architectural decisions from yesterday’s session. So it generates code that contradicts existing patterns, uses different libraries for the same purpose, or re-implements utilities that already exist.

Symptom: package.json grows three new dependencies per sprint for functionality you already had.
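This symptom is cheap to check mechanically. The sketch below compares two parsed package.json manifests, for example from the start and end of a sprint, and lists the dependency names that were added. `PackageManifest` and `addedDependencies` are names made up for this example, and how you obtain the two manifests (two git revisions, two release tags) is left open.

```typescript
interface PackageManifest {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

/** Names present in `after` but not in `before`, across both dependency sections. */
function addedDependencies(before: PackageManifest, after: PackageManifest): string[] {
  const names = (m: PackageManifest): string[] =>
    Object.keys(m.dependencies ?? {}).concat(Object.keys(m.devDependencies ?? {}));
  const known = names(before);
  return names(after)
    .filter((name) => known.indexOf(name) === -1)
    .sort();
}

// Example: two date libraries added next to the one the project already had.
const sprintStart: PackageManifest = { dependencies: { dayjs: "^1.11.0" } };
const sprintEnd: PackageManifest = {
  dependencies: { dayjs: "^1.11.0", moment: "^2.30.0", "date-fns": "^3.6.0" },
};
const added = addedDependencies(sprintStart, sprintEnd);
// added is ["date-fns", "moment"]
```

A list like this does not say whether a new dependency was justified, only that someone should ask.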

4. The Test Illusion

AI is exceptionally good at writing tests that pass. It’s also exceptionally good at writing tests that only test the happy path, miss edge cases, and verify implementation details instead of behavior.

Symptom: 90% code coverage. 60% of bugs found in production.
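Here is a small sketch of the gap between a test that passes and tests that pin down behavior. The function `applyDiscount` and the helpers are hypothetical, written without a test framework so the example stays self-contained.

```typescript
// Hypothetical function under test: apply a percentage discount to a price in cents.
function applyDiscount(priceCents: number, percent: number): number {
  if (!isFinite(priceCents) || priceCents < 0) {
    throw new RangeError("priceCents must be a non-negative number");
  }
  if (!isFinite(percent) || percent < 0 || percent > 100) {
    throw new RangeError("percent must be between 0 and 100");
  }
  return Math.round(priceCents * (1 - percent / 100));
}

// Tiny assertion helpers so the sketch needs no test framework.
function expectEqual(actual: number, expected: number): void {
  if (actual !== expected) throw new Error(`expected ${expected}, got ${actual}`);
}
function expectThrows(fn: () => unknown): void {
  let threw = false;
  try { fn(); } catch { threw = true; }
  if (!threw) throw new Error("expected the call to throw");
}

// Happy-path test: passes and executes most of the function, but proves little.
expectEqual(applyDiscount(1000, 10), 900);

// Behavior tests: boundaries, rounding, and inputs that must be rejected.
expectEqual(applyDiscount(1000, 0), 1000);   // no discount
expectEqual(applyDiscount(1000, 100), 0);    // full discount
expectEqual(applyDiscount(999, 33), 669);    // 669.33 rounds down to whole cents
expectThrows(() => applyDiscount(1000, 101)); // more than 100%
expectThrows(() => applyDiscount(-1, 10));    // negative price
expectThrows(() => applyDiscount(1000, NaN)); // not a number
```

The first assertion alone would produce a respectable coverage number. The remaining six are where production bugs usually hide.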

5. The Abstraction Collapse

AI tends to solve problems at the wrong level of abstraction — either too concrete (hardcoded values, copy-pasted logic) or too abstract (enterprise patterns for a 200-line script). Over time, the codebase becomes a Frankenstein of mismatched abstractions.

Symptom: A UserServiceFactoryProvider next to a function called doStuff().

What “Rescue Engineering” Looks Like

“Rescue engineering” is emerging as the hottest engineering discipline of 2026. These are the teams called in to save vibe-coded products that have hit the Spaghetti Point.

The typical rescue engagement:

Phase 1: Archaeology (Week 1-2)

- Map the actual architecture (it won’t match any documentation)

- Identify dead code (usually 30-50% of the codebase)

- Find the real dependencies (not what’s in package.json)

- Catalog security vulnerabilities

Phase 2: Triage (Week 3-4)

- Classify modules as: keep, rewrite, or delete

- Establish a testing baseline

- Set up CI/CD that actually catches problems

- Document what the code actually does vs. what it should do

Phase 3: Rebuild (Month 2-6)

- Strangler fig pattern: wrap old modules, replace incrementally

- Write comprehension tests (tests that pin down why the code behaves as it does, such as the business rule behind a branch, not just what it returns)

- Introduce architectural decision records (ADRs)

- Train the team to review AI code as critically as human code

The cost? 3-10x what it would have cost to build it right the first time.
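The strangler fig step in Phase 3 can be sketched as a facade that forwards each operation to the rewritten code once it exists and to the legacy code otherwise. Everything here (`OrderService`, `legacyOrders`, `rewrittenOrders`, `strangle`) is a hypothetical stand-in; a real migration would usually route at a module or HTTP boundary, but the shape is the same.

```typescript
interface OrderService {
  getTotalCents(itemPricesCents: number[]): number;
  formatReceipt(totalCents: number): string;
}

// Legacy module: left untouched while it is being replaced.
const legacyOrders: OrderService = {
  getTotalCents: (prices) => {
    let total = 0;
    for (const p of prices) total += p;
    return total;
  },
  formatReceipt: (totalCents) => "TOTAL:" + totalCents,
};

// Rewrite in progress: only one operation has been migrated so far.
const rewrittenOrders: Partial<OrderService> = {
  getTotalCents: (prices) => prices.reduce((sum, p) => sum + p, 0),
};

/** Facade: use the replacement where it exists, fall back to legacy otherwise. */
function strangle(legacy: OrderService, replacement: Partial<OrderService>): OrderService {
  return {
    getTotalCents: replacement.getTotalCents ?? legacy.getTotalCents,
    formatReceipt: replacement.formatReceipt ?? legacy.formatReceipt,
  };
}

const orders = strangle(legacyOrders, rewrittenOrders);
// orders.getTotalCents([100, 250]) runs the rewritten code and returns 350
// orders.formatReceipt(350) still runs the legacy code and returns "TOTAL:350"
```

Callers depend only on the facade, so each operation can be moved over, compared against the legacy result, and rolled back independently.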

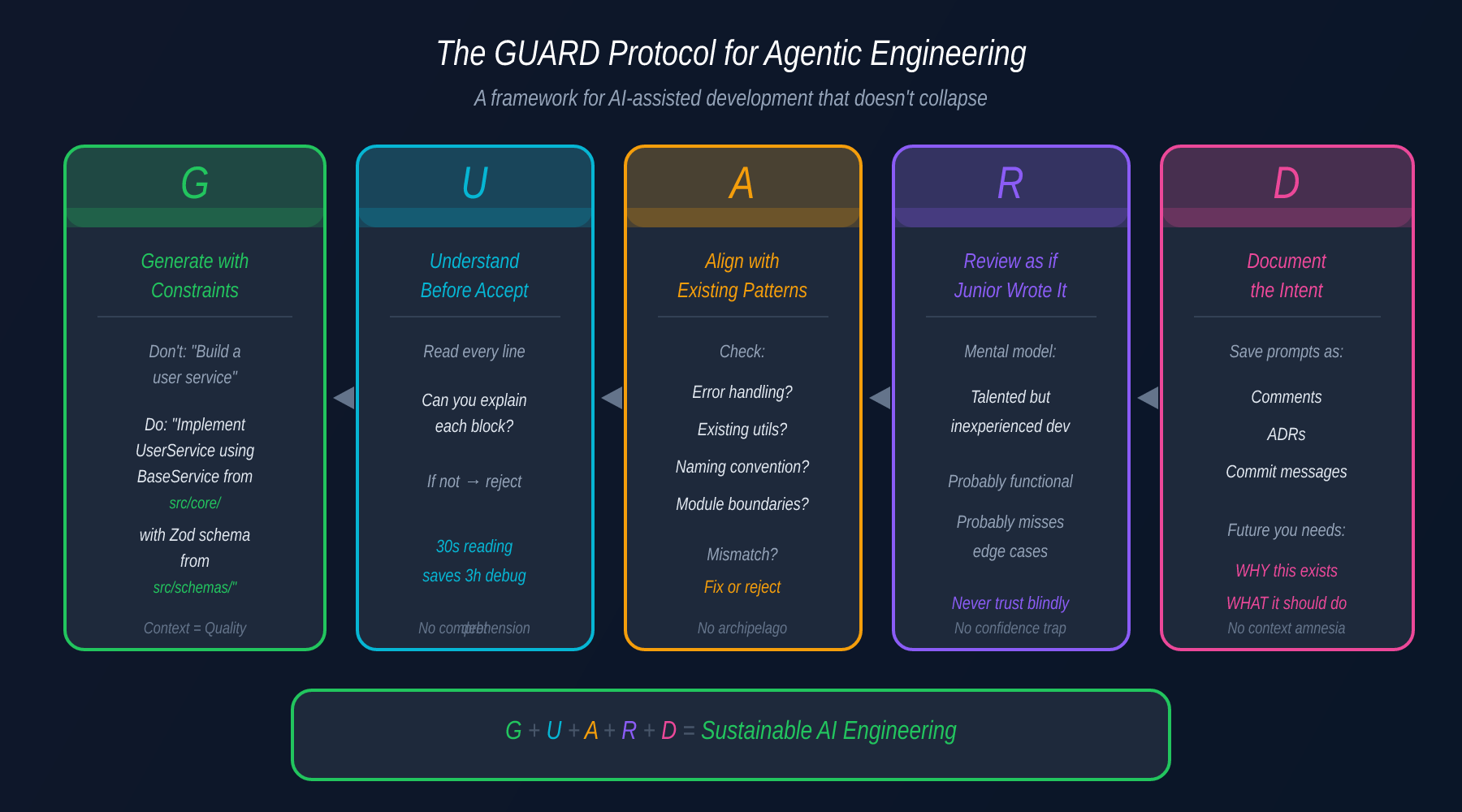

The Right Way: Agentic Engineering

The alternative to vibe coding isn’t rejecting AI — it’s agentic engineering: treating AI as a powerful tool within a disciplined engineering process.

Here’s my framework for AI-assisted development that doesn’t collapse:

The GUARD Protocol

G — Generate with constraints

Don’t prompt “build me a user service.” Prompt with your architecture: “Implement a UserService following our repository pattern in src/services/, using the BaseService class from src/core/, with Zod validation matching the schema in src/schemas/user.ts.”

U — Understand before accepting

Read every line. If you can’t explain what a block does, you can’t maintain it. The 30 seconds you spend reading saves 3 hours of debugging later.

A — Align with existing patterns

Before accepting generated code, verify: Does it follow our error handling pattern? Does it use our existing utilities? Does it match our naming conventions? If not, either fix it or reject it.

R — Review as if a junior wrote it

The most effective mental model: treat AI output as a submission from a talented but inexperienced developer. It’s probably functional. It probably misses edge cases. It definitely doesn’t know your system’s constraints.

D — Document the intent

Save your prompts as comments or ADRs. Future you (or your replacement) needs to understand why this code exists and what it was supposed to do, not just what it does.
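What documenting the intent looks like will vary by team. As one possible shape, here is a sketch of a doc comment that records the why, a summary of the prompt, and the known limits next to the code. The function and the comment fields are invented for illustration, not a standard format.

```typescript
/**
 * Intent: normalize emails before the uniqueness check so that
 *   " Ann@Example.com " and "ann@example.com" count as the same account.
 * Prompt (summarized): "Write normalizeEmail(raw) that trims whitespace and
 *   lowercases the address. No validation here; that lives elsewhere."
 * Known limits: does not strip "+tag" suffixes or dots. This is deliberate.
 * Reviewed by: a human who can explain every line.
 */
function normalizeEmail(raw: string): string {
  return raw.trim().toLowerCase();
}
```

The comment is longer than the function, and that is fine: the function is the easy part to regenerate, while the reasoning is not.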

Architecture-First AI

The teams I’ve seen succeed with AI follow this pattern:

- Humans design the architecture — module boundaries, data flow, API contracts

- AI implements within boundaries — individual functions, CRUD operations, boilerplate

- Humans review for coherence — does this fit? Does it match our patterns?

- AI writes tests — but humans write the test specifications

- Humans own the integration — wiring modules together, handling edge cases

The key insight: AI is excellent at local optimization and terrible at global coherence. Use it accordingly.
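A minimal sketch of this division of labor, with hypothetical names throughout (`User`, `UserRepository`, `InMemoryUserRepository`, `checkUserRepositoryContract`): the interface and the contract check are the human-owned parts, and the class is the kind of implementation that can be delegated and then held to that contract.

```typescript
// Human-owned contract: the boundary the implementation must stay inside.
interface User {
  id: string;
  email: string;
}
interface UserRepository {
  findById(id: string): User | undefined;
  save(user: User): void;
}

// The kind of boilerplate implementation that can be delegated to an assistant.
class InMemoryUserRepository implements UserRepository {
  private users: User[] = [];

  findById(id: string): User | undefined {
    const matches = this.users.filter((u) => u.id === id);
    return matches.length > 0 ? matches[0] : undefined;
  }

  save(user: User): void {
    // Replace any existing record with the same id, then store a copy.
    this.users = this.users
      .filter((u) => u.id !== user.id)
      .concat([{ id: user.id, email: user.email }]);
  }
}

// Human-written specification: returns a list of contract violations.
function checkUserRepositoryContract(makeRepo: () => UserRepository): string[] {
  const violations: string[] = [];
  const repo = makeRepo();
  if (repo.findById("missing") !== undefined) {
    violations.push("unknown id must return undefined");
  }
  repo.save({ id: "u1", email: "a@example.com" });
  if (repo.findById("u1")?.email !== "a@example.com") {
    violations.push("saved user must be retrievable");
  }
  repo.save({ id: "u1", email: "b@example.com" });
  if (repo.findById("u1")?.email !== "b@example.com") {
    violations.push("saving an existing id must overwrite");
  }
  return violations;
}
```

Calling `checkUserRepositoryContract(() => new InMemoryUserRepository())` returns an empty list. The same check can be run against any later implementation, generated or hand-written, without changing the specification.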

Metrics That Matter

If you’re a tech lead trying to measure whether your team is vibe coding or engineering with AI, track these:

| Metric | Healthy | Warning | Danger |
|---|---|---|---|
| Code churn (rewritten within 14 days) | < 5% | 5-15% | > 15% |
| PR review time (AI-generated) | Same as human | 30%+ faster | 50%+ faster |
| Dependency growth per sprint | 0-1 new | 2-3 new | 4+ new |
| Bug escape rate to production | < 5% | 5-15% | > 15% |
| Dead code percentage | < 10% | 10-25% | > 25% |
| “I don’t know what this does” in retros | Never | Sometimes | Frequently |

The last metric is the most important. If your team can’t explain their own code, you’ve already crossed the line from engineering to vibing.
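The numeric rows of the table are easy to turn into an automated check. The sketch below applies the table’s thresholds to per-sprint counts. The input shape (`SprintStats`) and the denominators are assumptions on my part: churn is taken as lines rewritten within 14 days divided by lines added, and bug escape rate as production bugs divided by all bugs found. PR review time and the retro question are left out because they are relative or qualitative.

```typescript
type Status = "healthy" | "warning" | "danger";

interface SprintStats {
  linesAdded: number;
  linesRewrittenWithin14Days: number;
  bugsFound: number;
  bugsFoundInProduction: number;
  newDependencies: number;
  deadCodePercent: number;
}

interface Assessment {
  codeChurn: Status;
  bugEscapeRate: Status;
  dependencyGrowth: Status;
  deadCode: Status;
}

/** Lower is better: below `warnAt` is healthy, above `dangerAbove` is danger. */
function classify(value: number, warnAt: number, dangerAbove: number): Status {
  if (value > dangerAbove) return "danger";
  if (value >= warnAt) return "warning";
  return "healthy";
}

function percent(part: number, whole: number): number {
  return whole === 0 ? 0 : (part / whole) * 100;
}

function assess(stats: SprintStats): Assessment {
  return {
    codeChurn: classify(percent(stats.linesRewrittenWithin14Days, stats.linesAdded), 5, 15),
    bugEscapeRate: classify(percent(stats.bugsFoundInProduction, stats.bugsFound), 5, 15),
    dependencyGrowth: classify(stats.newDependencies, 2, 3), // 0-1, 2-3, 4+
    deadCode: classify(stats.deadCodePercent, 10, 25),
  };
}

const sprintReport = assess({
  linesAdded: 2000,
  linesRewrittenWithin14Days: 400, // 20% churn
  bugsFound: 40,
  bugsFoundInProduction: 4,        // 10% escape rate
  newDependencies: 1,
  deadCodePercent: 30,
});
// sprintReport is { codeChurn: "danger", bugEscapeRate: "warning",
//                   dependencyGrowth: "healthy", deadCode: "danger" }
```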

The Bottom Line

Vibe coding isn’t going away. AI coding assistants aren’t going away. The question isn’t whether to use AI — it’s whether you’re engineering with AI or gambling with AI.

The difference:

| Vibe Coding | Agentic Engineering |
|---|---|
| “It works, ship it” | “It works, but why does it work?” |
| Accept and move on | Accept, understand, and integrate |
| AI drives architecture | Humans drive architecture, AI implements |
| Tests prove it compiles | Tests prove it’s correct |
| Speed is the metric | Sustainability is the metric |

| Speed is the metric | Sustainability is the metric |

Every line of AI-generated code you don’t understand is a liability on your balance sheet. It’s not technical debt; it’s a technical mortgage, and the interest compounds daily.

The teams that thrive in 2026 won’t be the ones that code fastest. They’ll be the ones that understand what they ship.

If your team is drowning in vibe-coded technical debt, start with the GUARD protocol. It won’t fix everything overnight, but it’ll stop the bleeding. The alternative is becoming one of those 8,000 startups on the rescue engineering waiting list.

— A Technical Lead who’s seen enough “AI-generated” incident reports for one lifetime.